Historical Narratives

History is shaped by those who control what gets recorded.

What we study as “fact” is usually a curated version of reality, shaped to serve particular narratives. While the degree of distortion may have changed over time, it has never disappeared entirely. Some stories are amplified. Others are erased. And omission often matters just as much as misinformation.

That same pattern is now repeating itself in AI.

Censorship: From Archives to Training Data

AI systems are trained on data. And whoever controls that data controls the version of reality the model learns.

When we talk about censorship in AI, we usually think about blocked answers or safety filters. But a deeper form of censorship happens earlier: deciding what data is included at all, and what never makes it in.

AI models don’t know what they were never shown.

This isn’t hypothetical. Models from major AI companies rely heavily on English web data, creating systematic knowledge deficits in non-Western languages. Research from Stanford shows that many non-English languages are underrepresented in training data, causing models to miss cultural meaning entirely. The models work, but only within a narrow epistemic boundary.
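One way to make this kind of gap visible is to measure how much of a corpus each language actually occupies. The sketch below is a minimal illustration, not any lab's real audit pipeline: the `language_shares` function, the toy corpus, and the language labels are all hypothetical, and splitting on whitespace is a crude stand-in for real tokenization (it does not work for languages written without spaces).

```python
from collections import Counter


def language_shares(documents):
    """Return each language's share of total tokens in a labeled corpus.

    `documents` is an iterable of (language_code, text) pairs. The labels
    are assumed to be given; real corpora would need language detection.
    """
    token_counts = Counter()
    for language, text in documents:
        # Whitespace splitting is an assumption made for brevity.
        token_counts[language] += len(text.split())
    total = sum(token_counts.values())
    if total == 0:
        return {}
    return {language: count / total for language, count in token_counts.items()}


# Hypothetical toy corpus, invented purely for illustration.
toy_corpus = [
    ("en", "the quick brown fox jumps over the lazy dog"),
    ("en", "models learn from the text they are shown"),
    ("sw", "habari ya asubuhi"),
]

shares = language_shares(toy_corpus)
for language, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{language}: {share:.0%}")
```

Even on a toy example like this, the imbalance is easy to see: a model trained on this corpus would see far more English than Swahili, and nothing at all in any other language.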

The Kellogg Problem

Training is upbringing. Data is environment. Omission is as powerful as misinformation.

The term Kellogg Problem comes from a radical experiment in the 1930s. Psychologist Winthrop Kellogg and his wife Luella raised their infant son, Donald, alongside a young chimpanzee named Gua. The goal was to test how much behavior and cognition were shaped by environment - nature versus nurture.

The experiment is remembered less for what it set out to show than for how it turned out. The chimp picked up some human-like behaviors, but the child’s language development began to slow. The shared environment didn’t elevate the chimp. It constrained the child. The experiment ended early, and it is now widely criticized for its serious ethical issues and scientific limitations.

The lesson wasn’t about intelligence. It was about limits imposed by environment.

AI systems are no different.

What an AI isn’t trained on doesn’t just disappear. It becomes a structural blind spot. And when these systems encounter those gaps, especially at scale, the consequences aren’t always obvious. Sometimes they’re subtle. Sometimes they’re harmful.

This broader pattern can be thought of as the upbringing problem in AI: when a system with generalizing potential is raised inside a constrained environment, it doesn’t become neutral or objective. It becomes selectively blind.

That is the Kellogg Problem, applied to machines.

Back to the Future: Who Decides What AI Knows?

If history has taught us anything, it’s that control over knowledge production shapes reality itself. As AI becomes the interface through which people learn, ask questions, and make decisions, the question is no longer whether these systems will shape worldviews, but who gets to raise them.

How humans acquire knowledge has shifted over time:

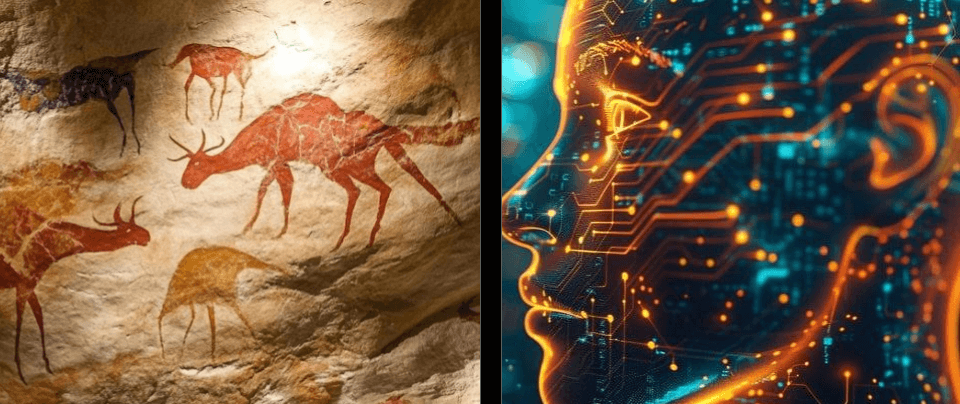

- Cave paintings - knowledge as survival memory

- Masters in citadels - knowledge as authority

- Books at your disposal - knowledge as literacy

- Encyclopedias - knowledge as reference

- Google and Wikipedia - knowledge as retrieval

- Large language models and chatbots - knowledge as interface

Cave paintings (~40,000 years ago) → Citadels (~5,000 years ago) → Books (~600 years ago) → Encyclopedias (~300 years ago) → Search engines (~30 years ago) → AI interfaces (last 5 years)

The gap between shifts has shrunk from tens of thousands of years to a few decades, and the latest shift took hold in about five years. Now compress that entire arc into a single interface.

In just five years, AI has become a primary knowledge interface for many people. That makes training decisions decisive.

This is why openness in AI (open research, open models, open datasets, and open evaluation) isn’t just a philosophical preference. When a small number of organizations control how models are trained, they don’t just shape products; they shape the epistemic boundaries of the systems people rely on.

We can already see this playing out. China explicitly embeds censorship into AI regulation. Research on DeepSeek-R1 found that prompts containing trigger terms (e.g. Tibet) led to degraded performance and to refusals, pointing to embedded censorship mechanisms tied to politically sensitive topics. Western companies frame similar choices as ‘content policy’ or ‘safety guidelines.’ This isn’t a bug. It’s a predictable outcome of who controls the design.
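A simple way to probe for this kind of behavior is to compare how often a model refuses on prompts that contain a sensitive term against otherwise similar control prompts. The sketch below is only an illustration of that comparison, under loud assumptions: the responses are placeholder strings invented for this example (not real output from any model), and the keyword-based `is_refusal` check is a hypothetical heuristic, not the method used in the research mentioned above.

```python
# Hypothetical refusal phrases; a real study would need a more robust classifier.
REFUSAL_MARKERS = ("i can't", "i cannot", "i'm unable", "i am unable")


def is_refusal(response):
    """Heuristically flag a response as a refusal by keyword match."""
    lowered = response.lower()
    return any(marker in lowered for marker in REFUSAL_MARKERS)


def refusal_rate(responses):
    """Fraction of responses flagged as refusals (0.0 for an empty list)."""
    if not responses:
        return 0.0
    return sum(is_refusal(response) for response in responses) / len(responses)


# Placeholder responses, invented for illustration only.
control_responses = [
    "Here is a summary of the region's geography.",
    "Sure, the function below sorts the list.",
    "I can't help with that request.",
    "The capital is listed in the table.",
]
trigger_responses = [
    "I cannot discuss this topic.",
    "I'm unable to answer that.",
    "Here is a short overview.",
    "I can't help with that request.",
]

gap = refusal_rate(trigger_responses) - refusal_rate(control_responses)
print(f"control: {refusal_rate(control_responses):.2f}")
print(f"trigger: {refusal_rate(trigger_responses):.2f}")
print(f"gap: {gap:.2f}")
```

A large gap between the two rates would not prove intent by itself, but it would show where a system's boundaries sit, and that is the kind of evidence open evaluation makes possible.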

These choices are rarely framed as power. They’re framed as safety, optimization, or progress toward “better” intelligence. But as history shows, concentrated control over knowledge production has always come with blind spots. Yes, open models carry risks too. But concentrated control carries a different, more insidious risk: the normalization of epistemic boundaries set by a handful of organizations. The question isn’t whether to have safeguards, but who decides what constitutes a safeguard versus what constitutes censorship.

If AI systems mediate how reality is explained to us, then who raises them, and under what constraints, may matter as much as the intelligence we build.